OPENAI: GRAMMAR CORRECTION AND PARAPHRASING MONSTER

By: Aly Diana

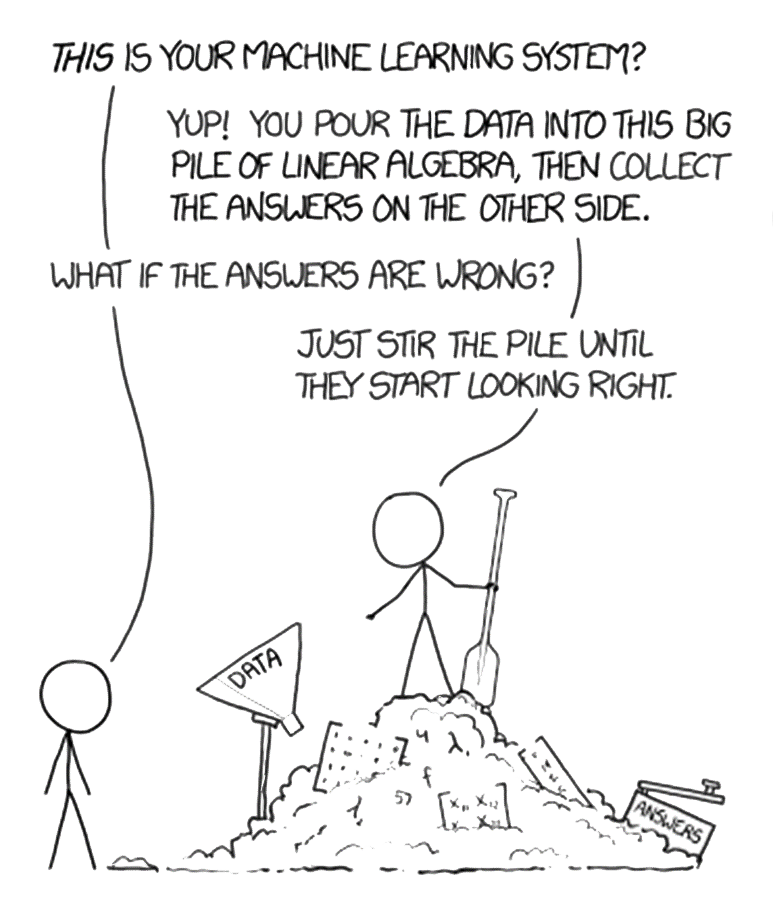

Some of us may have heard of OpenAI, a “thing” that I found quite startling. As a disclaimer, I am not tech-savvy, and conversations about machine learning, artificial intelligence (AI), or hardcore coding/modeling hurt my head. The first time I heard about OpenAI, it was introduced to me as a program that could correct my grammar while I was writing a draft. I was interested, thinking that it might make my life easier. And yes, it works (to some extent). In this brief piece, I will give some background on OpenAI and its focus on natural language processing (NLP), and then explain GPT-3 and its potential use for grammar correction and paraphrasing.

OpenAI is a private artificial intelligence research laboratory consisting of the for-profit OpenAI LP and its parent company, the non-profit OpenAI Inc. The company aims to promote and develop friendly AI in a responsible way and has released several widely used AI models, including Generative Pre-trained Transformer 3 (GPT-3), DALL-E and others. OpenAI has a strong focus on NLP, and the company’s research in this area will likely lead to improvements in machine understanding of human language.

NLP involves the use of algorithms, statistical models, and machine learning techniques to process and analyze large amounts of natural language data, such as text and speech. The goal of NLP is to enable computers to understand, interpret, and generate human language in a way that is both useful and effective. Examples of NLP tasks include, but are not limited to: a) Sentiment analysis: determining the emotional tone of a piece of text; b) Machine translation: translating text from one language to another; c) Text summarization: automatically generating a summary of a longer piece of text; d) Speech recognition: converting spoken language into written text; and e) Dialogue systems: building computer systems that can hold a conversation with a human. Overall, NLP plays a crucial role in enabling computers to process the vast amount of unstructured data that humans generate, such as social media posts, customer reviews, and customer support interactions.
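To make the first task on that list a little more concrete, here is a minimal sketch of sentiment analysis in Python. It is a toy word-counting approach, not how GPT-3 or any OpenAI model works, and the word lists and the `sentiment` function name are my own hypothetical choices for illustration.

```python
import re

# Hypothetical, hand-picked word lists (an assumption for illustration only;
# real systems learn these associations from large amounts of data).
POSITIVE_WORDS = {"good", "great", "helpful", "love", "excellent"}
NEGATIVE_WORDS = {"bad", "slow", "terrible", "hate", "confusing"}


def sentiment(text: str) -> str:
    """Label text as positive, negative, or neutral by counting known words."""
    # Lowercase the text and split it into simple word tokens.
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE_WORDS for word in words)
    negatives = sum(word in NEGATIVE_WORDS for word in words)
    score = positives - negatives
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("The support team was great and very helpful"))
print(sentiment("The site was slow and confusing"))
```

A counter like this misses negation ("not good"), sarcasm, and context, which is roughly the gap that large language models try to close.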

OpenAI has developed several models capable of making grammar corrections in English, including GPT-3. GPT-3 is a state-of-the-art language model trained on a massive amount of text data, allowing it to generate high-quality text that is often indistinguishable from human-written text. GPT-3 can also take context into account and perform a wide range of natural language processing tasks, such as grammar correction. However, it’s important to note that GPT-3 and other models can make mistakes and may catch only some errors. A model’s performance depends on the quality of its training data and on the specific task at hand.

In other words, although OpenAI’s models show promising results in grammar correction and other natural language processing tasks, they are not perfect and may not replace human editors in all cases. It’s essential to use them as a tool to assist rather than to rely on them fully.
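One way to treat a model as an assistant rather than an authority is to look at exactly what it changed before accepting the result. The sketch below is a minimal example under my own assumptions: it does not call any OpenAI service, the `list_changes` function name is hypothetical, and the "corrected" sentence is typed in by hand to stand in for whatever a tool returns. It uses Python’s standard `difflib` module to list the word-level differences.

```python
import difflib


def list_changes(original: str, corrected: str) -> list[tuple[str, str]]:
    """Return (before, after) pairs for every span of words that differs."""
    before = original.split()
    after = corrected.split()
    # Compare the two texts word by word instead of character by character.
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            # An empty string on one side means a pure insertion or deletion.
            changes.append((" ".join(before[i1:i2]), " ".join(after[j1:j2])))
    return changes


# Hand-written stand-in for a tool's suggestion (not real model output).
draft = "She go to school yesterday"
suggestion = "She went to school yesterday"

for old, new in list_changes(draft, suggestion):
    print(f"'{old}' -> '{new}'")
```

Reading through a short list of changes like this makes it easier to spot a "correction" that alters the meaning of a sentence.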

I believe that many of us have questions. Probably a good way to test OpenAI’s tools is to ask our burning questions and then evaluate the responses. OpenAI lists its different products on its website: https://openai.com/. The one that I have used is ChatGPT: https://chat.openai.com/chat. Happy exploring! FYI, the site is sometimes overloaded and shows error messages. Also, we need to log in to use the service.

References

- ChatGPT: https://chat.openai.com/chat

- Else H., Abstracts written by ChatGPT fool scientists. https://www.nature.com/articles/d41586-023-00056-7

- Stokel-Walker C. AI bot ChatGPT writes smart essays: should professors worry? https://www.nature.com/articles/d41586-022-04397-7

- Kung TH et al. Performance of ChatGPT on USMLE: Potential for AI-Assisted Medical Education Using Large Language Models. https://www.medrxiv.org/content/10.1101/2022.12.19.22283643v2.full.pdf

- Chan, A. GPT-3 and InstructGPT: technological dystopianism, utopianism, and “Contextual” perspective in AI ethics and industry. AI Ethics (2022). https://doi.org/10.1007/s43681-022-00148-6
